Democratizing AI for Real-World Decisions

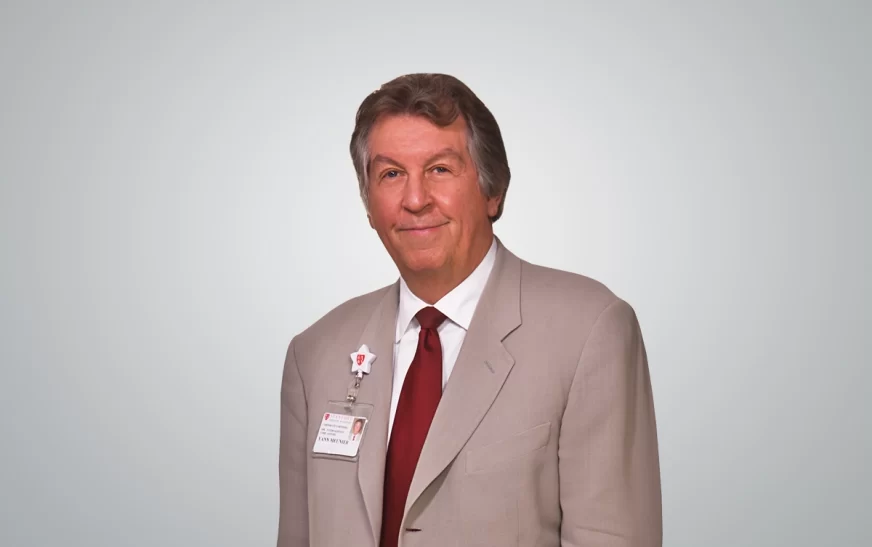

Enio Faria de Toledo Moraes

CEO

Movimento.ai

Global spending on generative AI is growing faster than most industries can absorb. Yet the majority of enterprises keep hitting the same wall: they cannot move from a promising pilot to something that actually runs the business. Bad data structures, weak governance, and fragmented teams have made AI adoption as much a management problem as a technical one. Few executives have worked both sides of that problem as thoroughly as Enio Moraes. His career spans cybersecurity, AI systems, data governance, and enterprise transformation. He led the Nasdaq listing of Semantix, Brazil’s first AI company. Across it all, one priority has remained constant: ensuring innovation delivers real, accountable results rather than impressive slide decks. He spoke with TradeFlock about what enterprise AI adoption actually looks like from the inside.

What first pulled you decisively toward AI as your core focus?

The thing that stayed with me from my early years in digital transformation was not the technology. It was a pattern. It showed up in every organisation I worked with, across every industry. Leaders would agree that change was necessary. The ambition was real. The tools were available. But the moment you needed to turn that intent into an actual decision, something would break down. The connection between strategy and execution was missing.

AI did not fix that problem. It made it more urgent and harder to manage at the same time. Writing Diário da IA was my attempt to close that gap directly. What became clear in that process was that the real obstacle was not capability. It was language. Leaders did not have a shared vocabulary for engaging with AI in a practical and responsible way.

Everything I do now is pointed at that specific problem: making AI understandable enough that it can be used with intention, not just enthusiasm.

What gap in enterprise AI adoption led you to build Movimento.AI?

Over time, what became increasingly difficult to ignore was not the level of investment in AI but the lack of clarity surrounding its application. Organisations were moving quickly to adopt platforms and tools, yet the fundamental questions, namely what problem was being solved and whether the underlying data could sustain that solution in production, were often left insufficiently defined.

This created a structural disconnect. At the executive level, AI was positioned as a priority; within the organisation, the conditions required to operationalise it were still evolving. In practice, that meant AI initiatives frequently remained at the level of demonstration rather than becoming part of everyday decision-making.

I started Movimento.AI to address that exact gap. At the outset, the focus was not on introducing additional technology but on reorienting organisations so that AI would become embedded in their operating logic. I soon found, however, that the challenge more often than not lies in bridging the gap between a well-articulated strategy and a system that can function reliably in real-world conditions.

What legacy do you aim to leave in shaping AI adoption across Brazil and beyond?

Over the course of this work, the idea of legacy has shifted from technological advancement to accessibility. The concentration of AI capability within a limited set of organisations creates a structural imbalance, particularly in regions where access to specialised expertise is not readily available.

The initiatives around Diário da IA, academic programs at ESPM, and advisory work across sectors have been shaped by a consistent objective: to extend strategic understanding of AI to leaders who operate without dedicated AI teams. In that sense, geography should not determine participation, whether in São Paulo or in cities further removed from established innovation hubs.

If, over time, more organisations are able to engage with AI as an operational tool rather than an abstract concept, and do so with both clarity and intent, then the work will have achieved what it set out to do.

“Technology becomes dangerous when leadership mistakes visibility for readiness.”

What did taking a Brazilian AI company to Nasdaq teach you about building at global standards?

The process of taking Semantix to Nasdaq altered the frame through which I evaluate both technology and execution. Entering that environment removes any tolerance for abstraction; what matters is not what a system is designed to do, but what it can demonstrate under scrutiny. Every layer, from architecture to governance to data traceability, must hold together in a way that can be audited, not explained.

What made the process more complex was not only the technical validation but the need to reconcile two very different operating contexts. The expectations and pace of U.S. capital markets do not naturally align with the realities of the Brazilian technology ecosystem, whether in terms of talent availability, regulatory structure, or infrastructure maturity. Bridging that gap required constant adjustment rather than a fixed model.

The conclusion that remained is one I continue to return to: real AI is not a demo; it is an operational discipline.

Why do companies rush into Generative AI without safeguards, and how do you reset that thinking at the top?

The pace at which organisations are adopting generative AI is rarely a reflection of technical readiness; it is more often a response to external pressure. Competitive announcements, board expectations, and the need to signal progress create a sense of urgency that compresses decision-making timelines.

What tends to receive less attention in that process is the role of data governance. When generative models are deployed on poorly structured or inadequately protected data, the associated risks are no longer theoretical. They manifest through regulatory exposure, reputational damage, and, in some cases, direct financial penalties. In Brazil, consequences under the LGPD, the country's General Data Protection Law, have already emerged in situations where governance frameworks lag behind adoption.

In discussions with executive teams, the conversation shifts when these implications are made explicit. The question then becomes less about speed and more about sustainability: do you want to be first to use AI, or do you want to use AI and still be in business two years from now? Governance, in this context, is not a constraint but the condition that allows AI to scale with confidence.